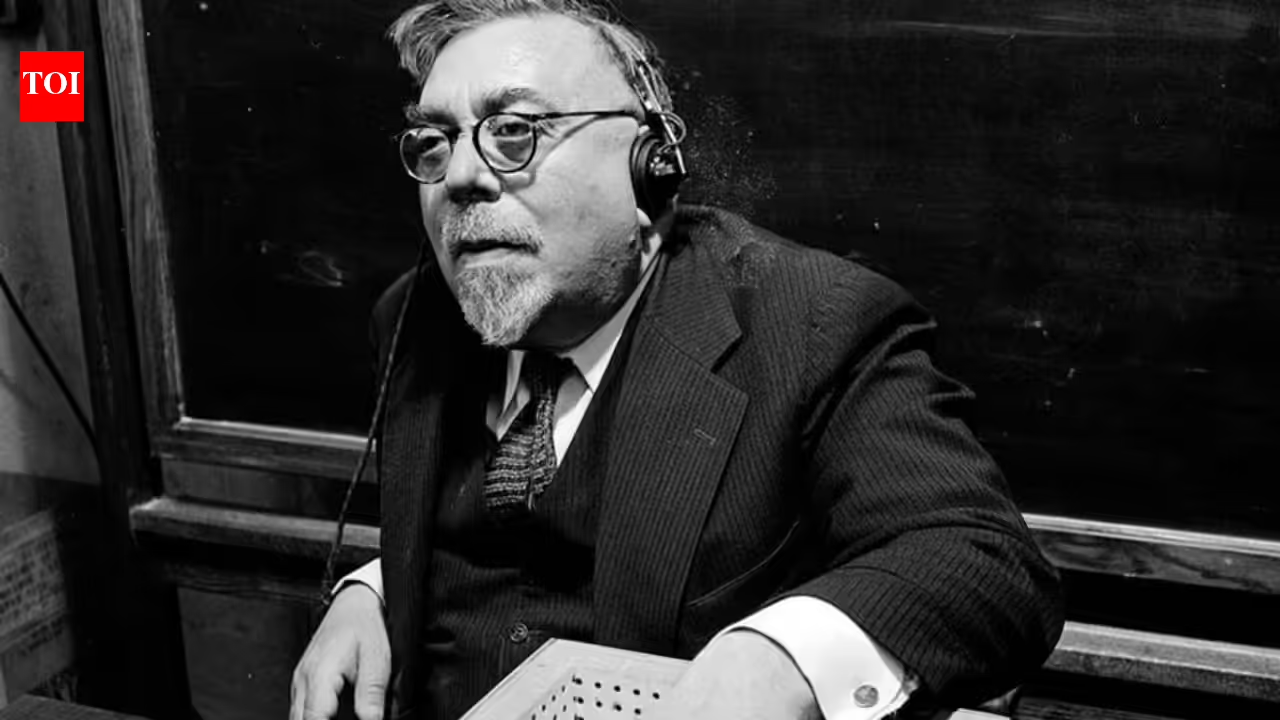

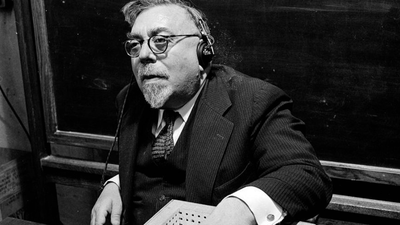

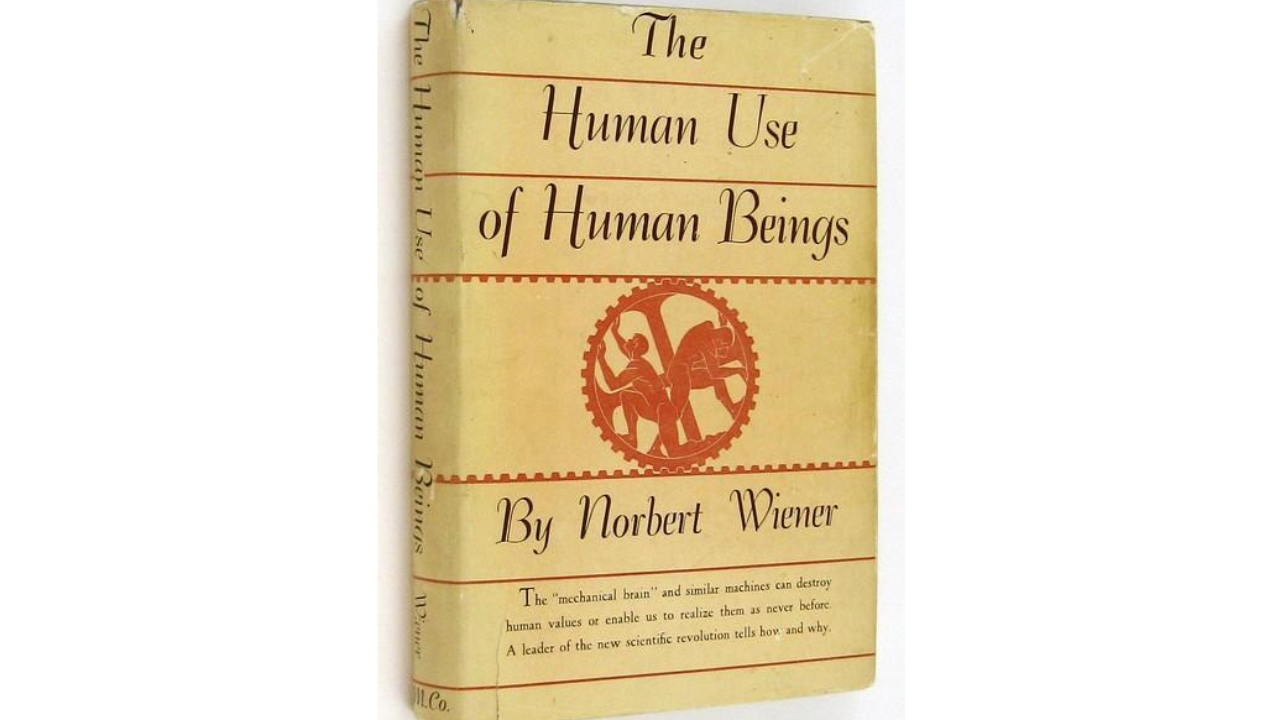

Norbert Wiener was one of the most unusual mathematicians of the 20th century: a child prodigy who graduated from Tufts at 14, earned a Harvard PhD at 18, and spent most of his career at MIT. During World War II, his work on anti-aircraft fire-control problems led him toward cybernetics, the study of control and communication in animals and machines. In 1950, he published The Human Use of Human Beings, a short book for general readers warning that machines would not simply help humans but could also shape work, judgment, and social life in ways people did not fully control.

Norbert Wiener’s early life and the road to MIT

Wiener was born in 1894 in Columbia, Missouri, and quickly showed extraordinary ability in mathematics and logic. He entered Tufts early, completed his degree at 14, and later moved through Harvard and Cornell before joining MIT in 1919, where he remained for the rest of his career. Britannica and MIT sources describe him as a foundational figure in cybernetics, a field that later influenced control theory, communications, computer science, and artificial intelligence.

Norbert Wiener’s warning in The Human Use of Human Beings

During World War II, Norbert Wiener worked on anti-aircraft systems designed to predict the movement of enemy planes so guns could hit moving targets more accurately. While working on the problem, he became interested in how machines could process information, adapt to changing situations, and correct themselves through feedback. These ideas later formed the basis of Cybernetics: Or Control and Communication in the Animal and the Machine, published in 1948, which became one of the foundational works behind modern computing, automation, and artificial intelligence.

Two years later, Wiener wrote The Human Use of Human Beings for ordinary readers, not just scientists. In the book, he explained that machines can be very useful, but they only do exactly what humans tell them to do. That sounds helpful at first, but Wiener warned that a machine does not understand common sense, fairness, or context. It follows instructions literally, even when the result is foolish or harmful. He also pointed out that people might slowly begin trusting machines more than their own judgment because machines can seem faster, cheaper, and more efficient. In the long run, he feared this could change the job market, reduce the need for human decision-making, and give too much power to systems that value efficiency over human judgment.

Why the book still feels modern

Wiener was not anti-technology. He believed machines could serve human purposes, but only if people remained responsible for the goals they set. His warning was that human institutions would increasingly rely on systems they did not fully understand, and that this dependence could weaken human agency over time. That is why the book reads less like a period piece and more like an early guide to the problems discussed today under AI alignment, automation, and algorithmic control. MIT Press has even described the later edition of the book as prescient about many contemporary dilemmas surrounding AI technology.

Wiener’s legacy

Wiener died in 1964, long before personal computers, the Internet, or modern generative AI. Yet his work still matters because he asked the questions that now sit at the center of the AI debate: who controls the machine, what goals is it optimizing for, and what happens when people stop thinking for themselves? That makes The Human Use of Human Beings one of the earliest and most durable warnings about the ethical risks of intelligent systems.