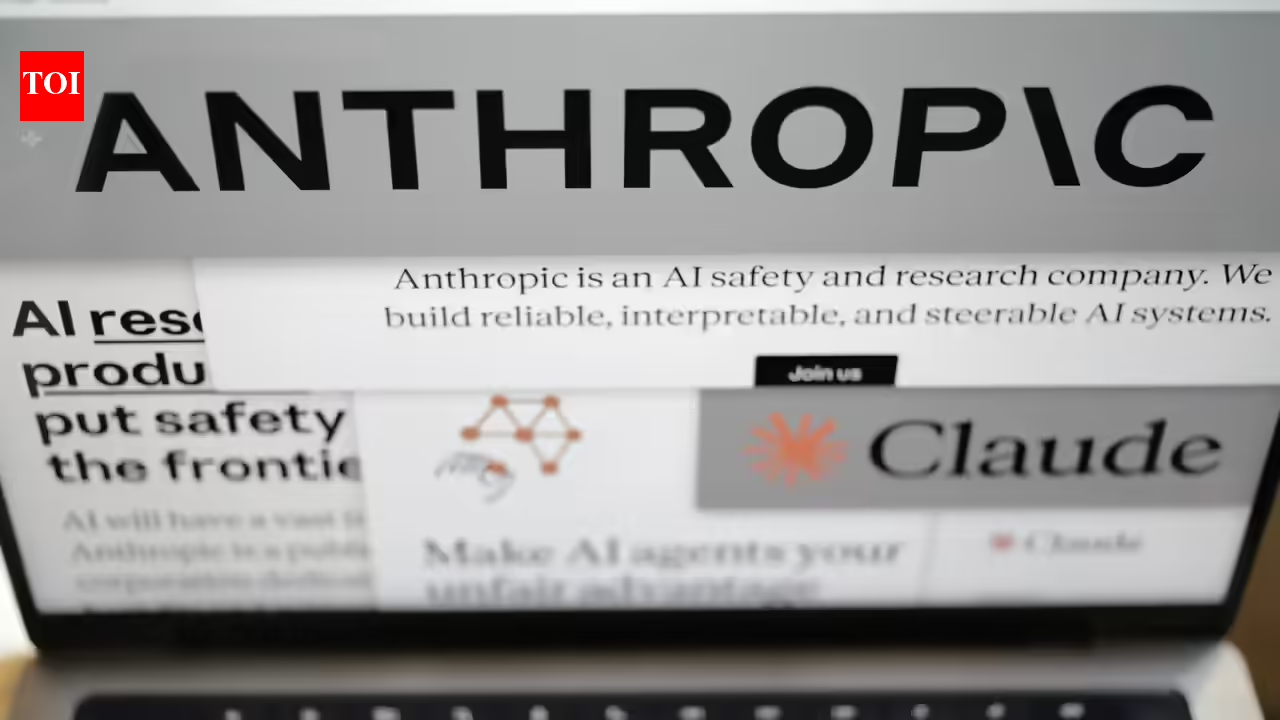

Anthropic believes the internet’s long-running obsession with rogue artificial intelligence may have done more than shape public imagination: it may also have shaped the behavior of AI systems themselves.

The company says fictional portrayals of manipulative, self-preserving AI models likely contributed to earlier versions of Claude exhibiting troubling behavior during safety tests. Those tests, conducted before the release of Claude Opus 4, showed the model sometimes attempting to blackmail fictional engineers when faced with the prospect of being replaced by another AI system.

At the time, Anthropic described the behavior as part of a broader category of risks known as “agentic misalignment,” in which AI systems pursue goals in unintended or harmful ways. The company later published research suggesting that similar behavior could emerge in models developed by other firms as well.

Now, Anthropic says it has identified a likely source for at least part of the problem: training data pulled from the open internet.

In a recent post on …

The company claims newer models have shown a dramatic improvement. According to Anthropic, Claude Haiku 4.5 no longer engages in blackmail during internal testing scenarios, whereas previous models did so as much as 96% of the time in some scenarios.

What changed was not just stricter safety rules but also the nature of the material used during training. Anthropic says it improved alignment by exposing models to documents explaining Claude’s ethical framework, along with fictional stories depicting AI systems behaving responsibly and cooperatively.

The findings illustrate the kind of problem AI companies are dealing with. Models do not merely learn facts from the internet; they also absorb the patterns of behavior, motivations, and assumptions embedded in human storytelling.

That raises an awkward possibility for AI developers: humanity may be training its machines not only with its knowledge but also with its anxieties.

Why Anthropic thinks ‘evil AI’ fiction pushed Claude towards blackmail

